Apache tuning is easy to talk about and surprisingly easy to get wrong.

A lot of server guides stop at configuration changes. They tell you to switch MPMs, enable HTTP/2, turn on TLS, add compression, maybe move PHP behind PHP-FPM, and call it “optimized.” The hard part is not changing the config. The hard part is proving that the server is actually healthier after those changes.

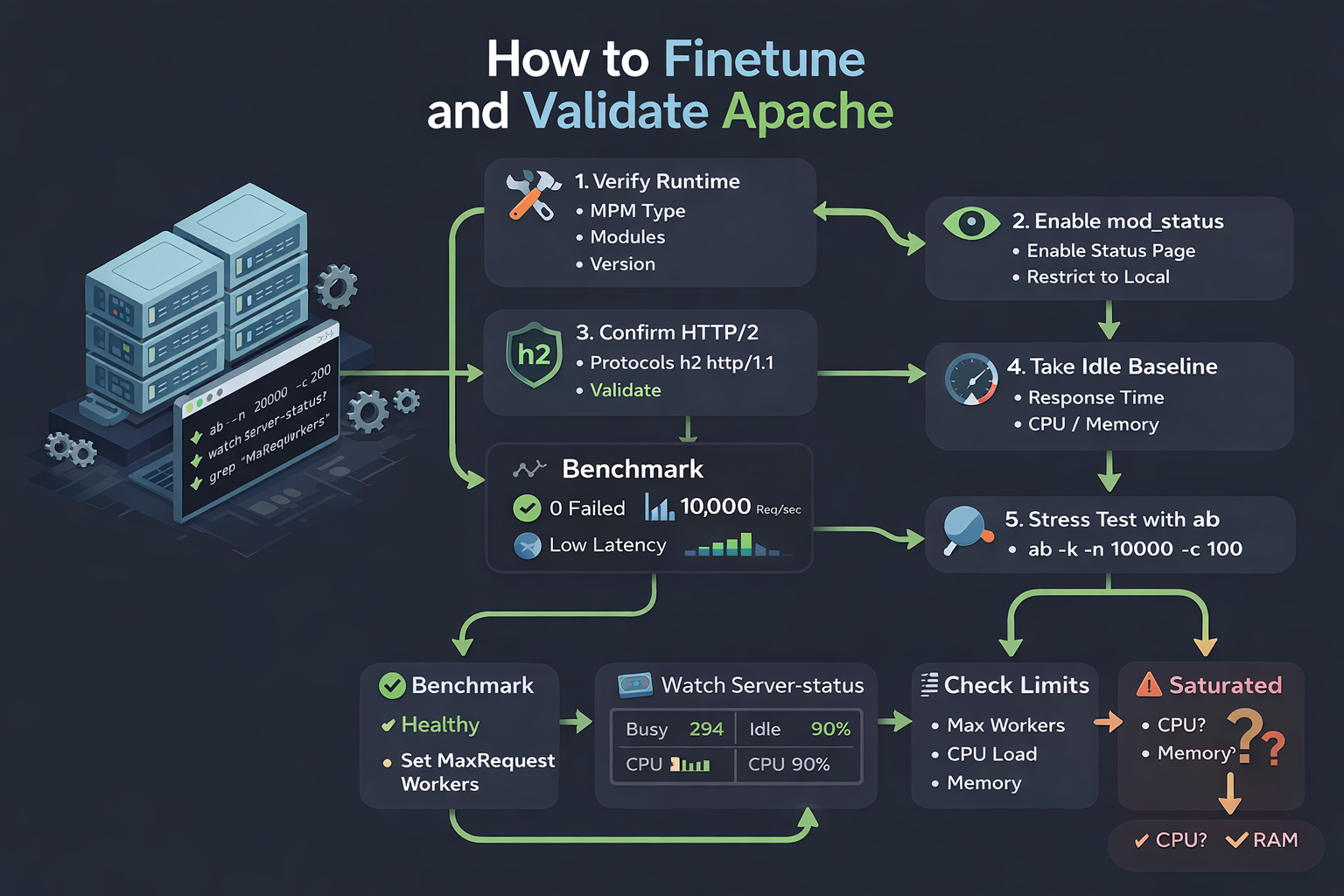

This article turns a practical troubleshooting and tuning session into a full workflow you can use on your own Ubuntu server. The setup started with a simple HTML site hosted on Apache, then moved through real validation: checking the active MPM, confirming the right modules were loaded, enabling mod_status, verifying HTTP/2, running ab benchmarks, investigating response-length anomalies, checking whether the site content was actually stable, and finally reading Apache’s live worker data during load.

The outcome is the part most people skip: not just “Apache is tuned,” but “Apache has been measured, stress-tested, and interpreted correctly.”

Why testing matters more than tuning

Apache’s own documentation makes this point indirectly across several places. The event MPM is designed to handle more simultaneous requests by offloading some connection work asynchronously, mod_status exists specifically to observe how the server is performing, and Apache’s performance guidance emphasizes that memory pressure and swapping are often the real limits rather than some single magic directive. In other words, tuning is only half the job. Measurement is the other half.

That is why the best way to validate an Apache setup is to start with the simplest possible workload. A static HTML page removes PHP, database latency, framework overhead, and cache behavior from the equation. If the server still struggles with a static page, the issue is almost certainly in Apache, TLS, the network stack, the machine itself, or the way the test is being run.

The starting point: a real Apache server on Ubuntu

In the session behind this article, the server had already been tuned at a high level and a simple HTML page had been deployed for validation. The next step was to answer a practical question:

How do you actually test whether Apache is healthy after tuning performance, concurrency, memory, CPU usage, security, and best practices?

The first verification step was to confirm what Apache was really running.

apachectl -t

apachectl -V | grep -i 'server mpm'

apachectl -M | grep -E 'status|http2|ssl|proxy|headers'

apache2 -v

The output showed:

- Syntax OK

- Server MPM: event

- Loaded modules included headers_module, http2_module, proxy_module, proxy_fcgi_module, ssl_module, and status_module

- Apache version was Apache/2.4.58 (Ubuntu)

That is a strong baseline. It tells you Apache is not only configured correctly, but also running the modern pieces you expect on a production-oriented stack.

Step 1: Verify the Apache runtime before benchmarking

When you benchmark a server, you need to know exactly what you are benchmarking. The MPM matters because Apache’s process and thread model changes depending on whether you are using prefork, worker, or event. Apache documents MPMs as the layer responsible for binding network ports, accepting requests, and dispatching server workers. The event MPM in particular is threaded like worker, but is designed to serve more simultaneous requests by freeing worker threads from some connection-handling tasks.

That distinction matters in real testing. If you are on event, then higher concurrency may be handled more efficiently than on a process-heavy prefork setup. If you are still on prefork, results under load may look very different, especially on memory-constrained systems. Apache also notes that threaded MPMs can help handle more simultaneous connections and mitigate some denial-of-service pressure compared with one-process-per-connection approaches.

So before you run any load tests, always answer these questions first:

- Is the config syntactically valid?

- Which MPM is active?

- Which performance and transport modules are loaded?

- Are you benchmarking static files, PHP-FPM, reverse proxying, or something else?

Without these answers, benchmark numbers are just noise.
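As a rough sketch of the module question above, a small shell function can compare the apachectl -M listing against the modules you expect to see. The function name missing_modules and the module names passed to it are illustrative assumptions; adjust the list to your own stack.

```shell
#!/bin/sh
# Hypothetical helper: read an `apachectl -M` listing on stdin and print
# every expected module (given as arguments) that is NOT in the listing.
missing_modules() {
    listing=$(cat)
    for mod in "$@"; do
        # apachectl -M prints lines such as " http2_module (shared)";
        # -w matches the module name as a whole word.
        if ! printf '%s\n' "$listing" | grep -qw -- "$mod"; then
            printf '%s\n' "$mod"
        fi
    done
}

# Usage on a live server (assumes apachectl is on PATH):
#   apachectl -M 2>/dev/null | missing_modules status_module http2_module ssl_module
```

An empty result means every expected module is loaded; any name printed is one to investigate before benchmarking.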

Step 2: Turn on mod_status and keep it private

One of the most useful things Apache ships with is mod_status. It provides a status page that shows current server statistics and also exposes a simple machine-readable format using ?auto. Apache explicitly describes it as a way for an administrator to find out how well the server is performing.

A safe minimal configuration looks like this:

ExtendedStatus On

<Location /server-status>

SetHandler server-status

Require local

</Location>

Then reload Apache:

sudo systemctl reload apache2

# or

sudo apachectl -k graceful

Test it locally:

curl 'http://127.0.0.1/server-status?auto'

The important phrase there is Require local. The status handler is extremely useful, but it should not be exposed to the public internet during routine operation. Apache’s status documentation and security guidance both make it clear that operational visibility is powerful, which also means it must be controlled. Apache’s security tips also recommend protecting resources and tuning connection capacity carefully so you can handle legitimate traffic without exhausting system resources.
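Because the ?auto format is one "Key: value" pair per line, it is easy to pull out a single field in a script. The helper below is a minimal sketch; the name status_field is an assumption made for illustration and is not part of Apache.

```shell
#!/bin/sh
# Hypothetical helper: print the value of one field from mod_status ?auto
# output supplied on stdin. $1 is the field name, e.g. IdleWorkers.
status_field() {
    awk -F': ' -v key="$1" '$1 == key { print $2 }'
}

# Usage (assumes /server-status is enabled for local requests):
#   curl -s 'http://127.0.0.1/server-status?auto' | status_field IdleWorkers
```

Reading from stdin keeps the parser separate from the fetch, so the same function works on saved snapshots as well as on live output.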

Step 3: Confirm HTTP/2 is not just installed, but actually enabled

Having http2_module loaded is not the same thing as serving HTTP/2 traffic. Apache’s HTTP/2 guide and module documentation explain that HTTP/2 is enabled through the Protocols directive, commonly with settings such as Protocols h2 http/1.1 for TLS sites. Apache’s guide also notes that most browsers use HTTP/2 over HTTPS, which is the normal case for public websites.

The server in this session showed:

grep -R "Protocols" /etc/apache2

Output:

/etc/apache2/mods-enabled/http2.conf:Protocols h2 h2c http/1.1

/etc/apache2/mods-available/http2.conf:Protocols h2 h2c http/1.1

This means the server was configured for:

- h2: HTTP/2 over TLS

- h2c: cleartext HTTP/2

- http/1.1: normal fallback

For a normal public HTTPS website, h2 http/1.1 is usually the cleaner choice unless you intentionally want cleartext HTTP/2 support. Apache documents both forms and makes clear that HTTP/2 is production-ready and configured through Protocols.

To verify actual protocol negotiation, use:

curl -I --http2 https://yourdomain.com

curl -s -o /dev/null -w 'http_version=%{http_version}\n' --http2 https://yourdomain.com

If you get http_version=2, you are really serving HTTP/2.

Step 4: Establish an idle baseline before generating load

One of the easiest mistakes in server tuning is benchmarking without first understanding idle behavior. You need to know what the server looks like when it is doing almost nothing.

Useful baseline commands include:

curl -o /dev/null -s -w 'connect=%{time_connect} ttfb=%{time_starttransfer} total=%{time_total}\n' https://yourdomain.com/

free -m

vmstat 1 5

top -H -p $(pgrep -d',' apache2)

curl -s 'http://127.0.0.1/server-status?auto' | grep -E 'BusyWorkers|IdleWorkers|ReqPerSec|BytesPerSec|CPULoad'

Apache’s performance tuning guidance is especially relevant here. The project notes that RAM is often the key scaling factor and explicitly warns against sizing the server in a way that causes swapping. It also points administrators toward observing real process sizes and real workload behavior instead of assuming fixed rules will fit every machine.

At idle, you want to see low CPU, healthy free memory, zero swap pressure, and plenty of idle workers.

Step 5: Use ab correctly and understand what it is actually measuring

ApacheBench, or ab, is one of the simplest ways to benchmark an Apache server. Apache describes it as a tool designed to give you an impression of how your current Apache installation performs, especially how many requests per second it can serve. That is exactly what makes it useful for a first-pass validation.

A simple benchmark ladder for a static page looks like this:

ab -n 1000 -c 10 https://yourdomain.com/

ab -k -n 5000 -c 50 https://yourdomain.com/

ab -k -n 10000 -c 100 https://yourdomain.com/

ab -k -n 20000 -c 200 https://yourdomain.com/

The options matter:

- -n is the total number of requests

- -c is concurrency

- -k enables keep-alive

What ab is very good at is fast, repeatable baseline testing.

What ab is not good at is representing all of production reality. Apache’s own documentation warns that ab does not fully implement HTTP/1.x and can end up measuring the tool itself rather than the server, especially under some conditions. That warning is important and often ignored. It means you should treat ab as a useful benchmark tool, not an oracle.

Step 6: Real benchmark results from the session

The first round of benchmarks against the static HTML page produced these results.

At concurrency 10:

- 1000 complete requests

- 0 failed requests

- about 758 requests/sec

- mean time per request about 13.2 ms

- 95th percentile around 17 ms

- maximum around 27 ms

At concurrency 50:

- 5000 complete requests

- 0 failed requests

- about 9874 requests/sec

- mean time per request about 5.1 ms

- 95th percentile around 7 ms

- maximum around 61 ms

At concurrency 100:

- 10000 complete requests

- 1 failed request

- about 9110 requests/sec

- mean time per request about 11 ms

- 95th percentile around 14 ms

- maximum around 129 ms

At concurrency 200:

- 20000 complete requests

- 49 failed requests

- about 8940 requests/sec

- mean time per request about 22 ms

- 95th percentile around 28 ms

- maximum around 230 ms
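As a sanity check on the figures above, ab's mean time per request is simply concurrency divided by requests per second, expressed in milliseconds. The sketch below reproduces that arithmetic; the function name mean_time_ms is an illustrative assumption.

```shell
#!/bin/sh
# Sketch: relate ab's "Time per request (mean)" to concurrency and throughput.
#   mean_ms = concurrency / requests_per_second * 1000
# $1 is the concurrency level, $2 is the reported requests per second.
mean_time_ms() {
    awk -v c="$1" -v rps="$2" 'BEGIN { printf "%.1f\n", c / rps * 1000 }'
}

# Using the rounded figures from the runs above:
#   mean_time_ms 10 758     -> 13.2
#   mean_time_ms 50 9874    -> 5.1
#   mean_time_ms 100 9110   -> 11.0
#   mean_time_ms 200 8940   -> 22.4
```

The practical point is that the mean rises with concurrency almost by definition once throughput flattens, so the percentile and maximum figures carry more information about server health than the mean does.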

At first glance, that looked like Apache might be struggling at higher concurrency. But the nature of the failures mattered.

The errors were not connection failures, receive failures, or exceptions. They were Length failures.

That is a very specific clue.

Step 7: Investigate “Length” failures before blaming Apache

ApacheBench defines Document Length based on the first successful response. If later responses do not match that length, it counts them as failures. Apache’s documentation also provides the -l option specifically to accept variable document length. This matters for dynamic pages, compression differences, and some response variations.
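When ab reports a non-zero failure count, it also prints a breakdown line of the form (Connect: 0, Receive: 0, Length: 49, Exceptions: 0). A small parser can tell you whether a run's failures were purely Length mismatches. This is a sketch only; the function name failure_kind and its three labels are assumptions chosen for illustration.

```shell
#!/bin/sh
# Hypothetical helper: classify the failure breakdown in ab output (stdin).
# Prints "none" when no breakdown line is present, "length-only" when only
# the Length counter is non-zero, and "other" for anything else.
failure_kind() {
    awk '
        /\(Connect:/ {
            found = 1
            gsub(/[^0-9,]/, "")       # "(Connect: 0, ...)" becomes "0,0,49,0"
            split($0, n, ",")         # n[1]=Connect n[2]=Receive n[3]=Length n[4]=Exceptions
            if (n[1] == 0 && n[2] == 0 && n[4] == 0 && n[3] > 0)
                print "length-only"
            else
                print "other"
        }
        END { if (!found) print "none" }'
}

# Usage (run.txt is an assumed file holding saved ab output):
#   failure_kind < run.txt
```

A "length-only" result is a prompt to check content stability and consider -l, while "other" points at real connection or receive problems.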

The immediate question was simple:

Was the server really returning different content, or was ab being overly strict?

To answer that, the page was downloaded 20 times and compared byte-for-byte.

mkdir -p /tmp/abcheck

for i in {1..20}; do

curl -sk https://urologyhospitals.com/ -o /tmp/abcheck/page_$i.html

done

wc -c /tmp/abcheck/page_*.html

sha256sum /tmp/abcheck/page_*.html

The result was clean:

- every file was exactly 37519 bytes

- every file had the same SHA-256 hash

That proved the page content itself was stable.
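Reading twenty hashes by eye works, but the comparison can be reduced to a single number. The sketch below counts distinct digests; the function name distinct_hashes is an assumption, and it relies on sha256sum from GNU coreutils being available.

```shell
#!/bin/sh
# Hypothetical helper: count the distinct SHA-256 digests among the files
# passed as arguments. A result of 1 means every copy is byte-identical.
distinct_hashes() {
    sha256sum "$@" | awk '{ print $1 }' | sort -u | wc -l | tr -d ' '
}

# Usage with the downloads from the loop above:
#   distinct_hashes /tmp/abcheck/page_*.html
```

Any result greater than 1 means the server really did return different bodies, which would make the Length failures genuine rather than an accounting artifact.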

Then headers were checked:

curl -skI https://urologyhospitals.com/ | grep -E -i 'content-length|content-encoding|vary|cache-control|etag|last-modified'

Returned headers included:

- last-modified

- etag

- content-length: 37519

- vary: Accept-Encoding

That is a normal enough response for a static asset-backed page. The key point is that the page was not actually changing.

So the next step was to rerun the benchmark with -l.

ab -l -k -n 10000 -c 100 https://urologyhospitals.com/

ab -l -k -n 20000 -c 200 https://urologyhospitals.com/

Results:

At concurrency 100 with -l:

- 10000 complete requests

- 0 failed requests

- about 10237 requests/sec

- median around 8 ms

- 95th percentile around 12 ms

- maximum around 127 ms

At concurrency 200 with -l:

- 20000 complete requests

- 0 failed requests

- about 9482 requests/sec

- median around 17 ms

- 95th percentile around 27 ms

- maximum around 230 ms

That changed the interpretation completely.

The earlier “failures” were not proof that Apache was broken under load. They were benchmark accounting artifacts. This aligns well with Apache’s own caveat that ab is a useful indicator but has protocol limitations and can sometimes reflect tool behavior as much as server behavior.

Step 8: Read the benchmark like an engineer, not like a scoreboard

Once the Length noise was removed, the benchmark told a more realistic story.

The server was healthy.

Throughput flattened around roughly 9.5k to 10.2k requests per second in this same-host benchmark. That flattening is normal. Very few systems scale linearly forever. The important signal was that failures disappeared, response times remained low for a static HTML page, and the server did not fall apart under concurrency 200.

What did change at higher concurrency was tail latency.

That is worth paying attention to. At concurrency 200, the median stayed respectable, but the 99th percentile and the longest requests were noticeably higher. This is how real systems usually behave when they are still functioning well but are starting to accumulate contention somewhere in the stack: TLS, kernel socket scheduling, worker contention, CPU bursts, or simply the benchmark client itself.

This is where many teams stop too early. They see “0 failed requests” and call the system done. A better reading is:

- 50 concurrency looked very comfortable

- 100 concurrency looked strong

- 200 concurrency still worked, but tail latency was clearly rising

That is not failure. It is the beginning of the server’s stress curve.
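To track that stress curve across runs, it helps to pull individual rows out of ab's percentile table rather than rereading the whole report. This is a minimal sketch; ab_percentile is a hypothetical name, and it assumes ab's standard "Percentage of the requests served within a certain time" table is present in the input.

```shell
#!/bin/sh
# Hypothetical helper: print one row of ab's percentile table.
# Reads ab output on stdin; $1 is the percentile, e.g. 50, 95, 99 or 100.
ab_percentile() {
    awk -v p="$1%" '$1 == p { print $2 }'
}

# Usage (run.txt is an assumed file holding saved ab output):
#   ab -l -k -n 20000 -c 200 https://yourdomain.com/ > run.txt
#   echo "p50=$(ab_percentile 50 < run.txt) ms  p99=$(ab_percentile 99 < run.txt) ms"
```

Recording the median and the 99th percentile side by side for each concurrency level makes a widening gap between them easy to spot.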

Step 9: Use mod_status to observe live worker behavior

Load tests only tell part of the story. You also need to know what Apache itself believes is happening during the run.

That is exactly what mod_status is for. Apache documents the machine-readable status page as a source of current server statistics and exposes fields such as worker counts, request rate, bytes per second, and CPU load.

A useful live watch command is:

watch -n 1 "curl -s http://127.0.0.1/server-status?auto | grep -E 'BusyWorkers|IdleWorkers|ReqPerSec|BytesPerSec|CPULoad|Scoreboard'"

A later snapshot from the session showed:

CPULoad: 1.90433

ReqPerSec: 42.6679

BytesPerSec: 1610370

BusyWorkers: 6

IdleWorkers: 144

That is a healthy server, not a saturated one.

If Apache had been hitting a worker ceiling, you would expect IdleWorkers to fall toward zero while BusyWorkers stayed very high. Instead, the server still had abundant idle worker capacity in the sampled moment.

This is consistent with how the event MPM is intended to behave. It is specifically designed to avoid tying up all worker threads on slow or idle connection states and to serve more simultaneous requests by handling some connection states asynchronously.
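One way to put a number on "abundant idle capacity" is to express busy workers as a share of all workers currently reported. The sketch below does that from ?auto output; worker_utilization is a hypothetical name, and the percentage is relative to the workers Apache has spawned at that moment, which may be fewer than MaxRequestWorkers allows.

```shell
#!/bin/sh
# Sketch: percentage of currently reported Apache workers that are busy.
# Reads mod_status ?auto output on stdin.
worker_utilization() {
    awk -F': ' '
        $1 == "BusyWorkers" { busy = $2 }
        $1 == "IdleWorkers" { idle = $2 }
        END {
            total = busy + idle
            if (total > 0) printf "%.1f\n", busy * 100 / total
            else print "n/a"
        }'
}

# Usage:
#   curl -s 'http://127.0.0.1/server-status?auto' | worker_utilization
```

For the snapshot above (6 busy, 144 idle) this works out to 4.0 percent, which is nowhere near a worker ceiling.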

Step 10: Understand why a one-second watch can miss a two-second benchmark peak

One subtle but important detail in this session was timing.

The ab -l -k -n 20000 -c 200 run finished in about 2.1 seconds. A watch -n 1 loop samples once per second. That means it is very easy to miss the exact peak of the run and capture the server just before or just after the busiest instant.

Apache’s status page also reports some fields, including ReqPerSec, BytesPerSec, and CPULoad, as averages over the server’s uptime rather than as instantaneous rates, which means those numbers do not line up with a very short ab burst.

So if you want to catch the peak more accurately, use a faster polling loop and a longer benchmark.

For example:

while true; do

date '+%H:%M:%S'

curl -s 'http://127.0.0.1/server-status?auto' | grep -E 'BusyWorkers|IdleWorkers|ReqPerSec|BytesPerSec|CPULoad'

sleep 0.2

done

And in another terminal:

ab -l -k -n 100000 -c 200 https://yourdomain.com/

This gives Apache enough time to settle into a real load window and gives you enough samples to catch the interesting part.
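If the polling loop's output is redirected to a file, the peak can be extracted afterwards instead of being watched for in real time. The following is a sketch under that assumption; the function name peak_busy and the file name samples.log are illustrative.

```shell
#!/bin/sh
# Sketch: find the highest BusyWorkers value across many ?auto samples.
# Reads the concatenated samples on stdin; timestamp lines are ignored
# because they do not match the "BusyWorkers: N" shape.
peak_busy() {
    awk -F': ' '
        $1 == "BusyWorkers" && $2 + 0 > max { max = $2 + 0 }
        END { print max + 0 }'
}

# Usage (assumes the polling loop was run with its output saved):
#   peak_busy < samples.log
```

Comparing this peak with the total worker count shows how close the run came to saturation, even if no single glance at the terminal caught it.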

Step 11: Watch memory, CPU, and swap while Apache is under load

Apache benchmarking is never complete until you observe the operating system too.

Apache’s performance guidance is direct about this. RAM is a major factor in scalability, and the server should be configured so it does not swap under load. The practical implication is that tuning MaxRequestWorkers or thread counts without watching memory is dangerous.

The core monitoring commands used in this workflow are:

free -m

vmstat 1

top -H -p $(pgrep -d',' apache2)

nproc

What to look for:

- If IdleWorkers stays high and swap stays at zero, Apache still has room.

- If IdleWorkers drops near zero, you are approaching a worker limit.

- If CPU pegs hard while memory remains comfortable, CPU or TLS overhead may be the bottleneck.

- If memory pressure rises and swapping begins, Apache is oversized for the box and MaxRequestWorkers is too aggressive.

That last point is especially important. Apache can look “fast” right up until the moment the machine begins to swap, and then latency becomes chaotic. Apache’s own tuning guidance treats avoiding swap as foundational, not optional.
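The checklist above can be written down as a small triage function so the same reading is applied to every run. This is only a sketch: the function name diagnose, its argument order, and both thresholds (2 idle workers, 10 percent idle CPU) are arbitrary assumptions that you should adjust for your own server.

```shell
#!/bin/sh
# Hypothetical triage helper encoding the checklist above.
# Arguments: IDLE_WORKERS  SWAP_IO  CPU_IDLE_PCT
#   IDLE_WORKERS : IdleWorkers from server-status?auto
#   SWAP_IO      : sum of the si and so columns from vmstat
#   CPU_IDLE_PCT : the id column from vmstat
# Swap is checked first because it is the most damaging condition.
diagnose() {
    idle_workers=$1 swap_io=$2 cpu_idle=$3
    if [ "$swap_io" -gt 0 ]; then
        echo "swapping: lower MaxRequestWorkers or add RAM"
    elif [ "$idle_workers" -le 2 ]; then        # assumed threshold
        echo "worker limit: few idle workers left"
    elif [ "$cpu_idle" -lt 10 ]; then           # assumed threshold
        echo "cpu bound: CPU or TLS overhead likely"
    else
        echo "headroom: Apache still has room"
    fi
}

# Usage with values read during a load test:
#   diagnose 144 0 85
```

All three arguments must be integers, which matches what vmstat and server-status report for these fields.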

Step 12: Interpret the scoreboard without overthinking it

The Scoreboard line in server-status looks cryptic the first time you see it, but it is extremely useful once you know what it represents.

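Each character is one worker slot: _ is a worker waiting for a connection, W is sending a reply, K is holding a keep-alive connection, R is reading a request, and . is an open slot with no current process. In practice, a few W entries among many idle positions mean that a few workers are actively sending replies and most capacity is available. That matched the observed session: a handful of active workers and a very large idle pool.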

For day-to-day operations, you do not need to memorize every scoreboard symbol. The most practical interpretation is:

- many waiting or idle positions means capacity is available

- many busy states with very few idle positions means you are near a limit

- a scoreboard that remains heavily busy under sustained load deserves closer attention

When paired with BusyWorkers and IdleWorkers, the scoreboard becomes a quick visual sanity check.
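For a long scoreboard, counting symbols is easier than scanning them. The sketch below tallies the most common states from ?auto output; scoreboard_summary is a hypothetical name, and it deliberately covers only five of the symbols that mod_status defines.

```shell
#!/bin/sh
# Sketch: summarize the Scoreboard line from mod_status ?auto output (stdin).
# Symbols counted: "_" waiting for connection, "W" sending reply,
# "K" keepalive, "R" reading request, "." open slot with no current process.
scoreboard_summary() {
    awk -F': ' '$1 == "Scoreboard" {
        s = $2
        # gsub returns the number of replacements, i.e. the symbol count.
        printf "waiting=%d sending=%d keepalive=%d reading=%d open=%d\n",
            gsub(/_/, "", s), gsub(/W/, "", s), gsub(/K/, "", s),
            gsub(/R/, "", s), gsub(/\./, "", s)
    }'
}

# Usage:
#   curl -s 'http://127.0.0.1/server-status?auto' | scoreboard_summary
```

A large waiting count next to small sending and keepalive counts is the numeric version of the healthy picture described above.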

Step 13: Security and operational validation should be part of performance testing

Performance testing is not complete if the server is fast but carelessly exposed.

A few basic checks belong in the same validation session:

curl -I http://yourdomain.com/

curl -I https://yourdomain.com/

curl -I https://yourdomain.com/server-status

grep -R "ProxyRequests" /etc/apache2 /etc/httpd 2>/dev/null

sudo apt update && sudo apt list --upgradable | grep apache2

Why these checks matter:

- HTTP should redirect to HTTPS for public sites.

- /server-status should not be reachable publicly.

- ProxyRequests should not be enabled unless you intentionally built a forward proxy.

- Apache security updates should be applied.

Apache’s proxy documentation and security guidance have long treated open or misconfigured proxy behavior as dangerous, and Ubuntu continues to publish Apache security notices for supported releases. In early 2026 alone, Ubuntu issued security notices and a related regression notice for Apache packages on supported LTS releases. That matters because on Ubuntu, the package version string alone does not always tell the full patch story; distribution packages often receive backported fixes.

That is why “I am on Apache 2.4.58” is not enough by itself. What matters is whether your distribution’s Apache package is current for your release.
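The HTTP-to-HTTPS check in particular is easy to automate from the headers that curl -I returns. The following is a minimal sketch; the function name redirects_to_https is an assumption, and it treats only 301, 302, 307, and 308 responses with an https:// Location header as a pass.

```shell
#!/bin/sh
# Hypothetical helper: read `curl -sI http://...` output on stdin and print
# "yes" if it is a redirect to an https:// URL, otherwise "no".
redirects_to_https() {
    awk '
        { sub(/\r$/, "") }                        # HTTP headers end in CRLF
        NR == 1 { code = $2 }                     # e.g. "HTTP/1.1 301 Moved Permanently"
        tolower($1) == "location:" { loc = $2 }
        END {
            if (code ~ /^30[1278]$/ && loc ~ /^https:\/\//) print "yes"
            else print "no"
        }'
}

# Usage:
#   curl -sI http://yourdomain.com/ | redirects_to_https
```

The same pattern extends to the /server-status check by inspecting the status code of the public request, where anything other than a refusal deserves attention.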

Step 14: What this session actually proved

After all the checks, the conclusions were practical and grounded.

The Apache configuration was syntactically correct.

The server was running the event MPM, which is a strong choice for handling simultaneous connections efficiently on a modern setup.

The required modules for a modern HTTPS stack were loaded, including HTTP/2, TLS, headers, proxy support, PHP-FPM proxying, and status reporting.

HTTP/2 was configured globally through Protocols, which is how Apache expects it to be enabled.

The static site content was stable across repeated fetches, and the early Length failures reported by ApacheBench were benchmark artifacts rather than evidence of broken responses. ApacheBench itself documents both the variable-length handling option and the limitations of the tool.

With -l, the static page benchmark showed zero failed requests even at concurrency 200. Throughput remained strong, though tail latency clearly rose at higher concurrency.

The mod_status snapshot that was captured showed the server had a large reserve of idle workers, which means the server was not obviously constrained by MaxRequestWorkers at that moment.

The remaining work for a production-grade conclusion is not “more guessing.” It is longer-duration testing while collecting server-status, CPU, memory, and swap data at the same time.

That is the difference between tuning a server and validating a server.

A practical Apache validation workflow you can reuse

If you want to apply this same method to your own server, use this sequence.

First, verify the runtime:

apachectl -t

apachectl -V | grep -i 'server mpm'

apachectl -M | grep -E 'status|http2|ssl|proxy|headers'

apache2 -v

Then turn on local-only server-status:

ExtendedStatus On

<Location /server-status>

SetHandler server-status

Require local

</Location>

Confirm HTTP/2 configuration:

grep -R "Protocols" /etc/apache2

curl -s -o /dev/null -w 'http_version=%{http_version}\n' --http2 https://yourdomain.com

Collect an idle baseline:

curl -o /dev/null -s -w 'connect=%{time_connect} ttfb=%{time_starttransfer} total=%{time_total}\n' https://yourdomain.com/

free -m

vmstat 1 5

top -H -p $(pgrep -d',' apache2)

curl -s 'http://127.0.0.1/server-status?auto' | grep -E 'BusyWorkers|IdleWorkers|ReqPerSec|BytesPerSec|CPULoad'

Run progressive load tests:

ab -n 1000 -c 10 https://yourdomain.com/

ab -k -n 5000 -c 50 https://yourdomain.com/

ab -k -n 10000 -c 100 https://yourdomain.com/

ab -k -n 20000 -c 200 https://yourdomain.com/

If you see Length failures, verify content stability and rerun with -l:

mkdir -p /tmp/abcheck

for i in {1..20}; do

curl -sk https://yourdomain.com/ -o /tmp/abcheck/page_$i.html

done

wc -c /tmp/abcheck/page_*.html

sha256sum /tmp/abcheck/page_*.html

ab -l -k -n 10000 -c 100 https://yourdomain.com/

ab -l -k -n 20000 -c 200 https://yourdomain.com/

During longer runs, poll server-status faster:

while true; do

date '+%H:%M:%S'

curl -s 'http://127.0.0.1/server-status?auto' | grep -E 'BusyWorkers|IdleWorkers|ReqPerSec|BytesPerSec|CPULoad'

sleep 0.2

done

And always watch the machine:

free -m

vmstat 1

top -H -p $(pgrep -d',' apache2)

That workflow is simple, repeatable, and grounded in how Apache itself exposes performance information.

Final thoughts

The most valuable lesson from this Apache tuning session is not a particular directive or a single benchmark number.

It is the method.

Start with a static page. Verify the runtime. Confirm the MPM. Confirm the modules. Confirm HTTP/2. Expose mod_status safely. Benchmark in stages. Investigate anomalies instead of panicking. Validate whether the content is really changing. Rerun with the correct benchmark options. Watch workers, memory, CPU, and swap while the test is happening. Then decide whether the server is healthy.

That is how you turn Apache tuning from guesswork into engineering.

And once you can do that on a simple HTML page, you are ready for the harder next step: repeating the same process with PHP-FPM, reverse proxying, application code, and database-backed pages, where Apache is only one part of the performance story.